Chat-Based AI Image Generation: How Conversation Replaces Prompt Engineering

The rise of AI image generation has created a new skill requirement: prompt engineering. Users must learn specific syntax, parameter adjustments, and iterative refinement techniques to get desired results. This learning curve limits adoption among professionals who could benefit most from AI-generated visuals.

What if AI image tools worked like a conversation with a designer instead? You describe what you need, see the result, and refine through natural dialogue. This approach could remove the technical barrier and make AI image generation accessible to non-technical users.

The Prompt Engineering Problem

Current AI image generation tools require specialized knowledge. Midjourney users need to understand Discord commands and parameter syntax. DALL-E provides single-turn generation with limited refinement options. Even users familiar with AI concepts struggle to produce consistent, professional-quality results without investing time in learning prompt construction.

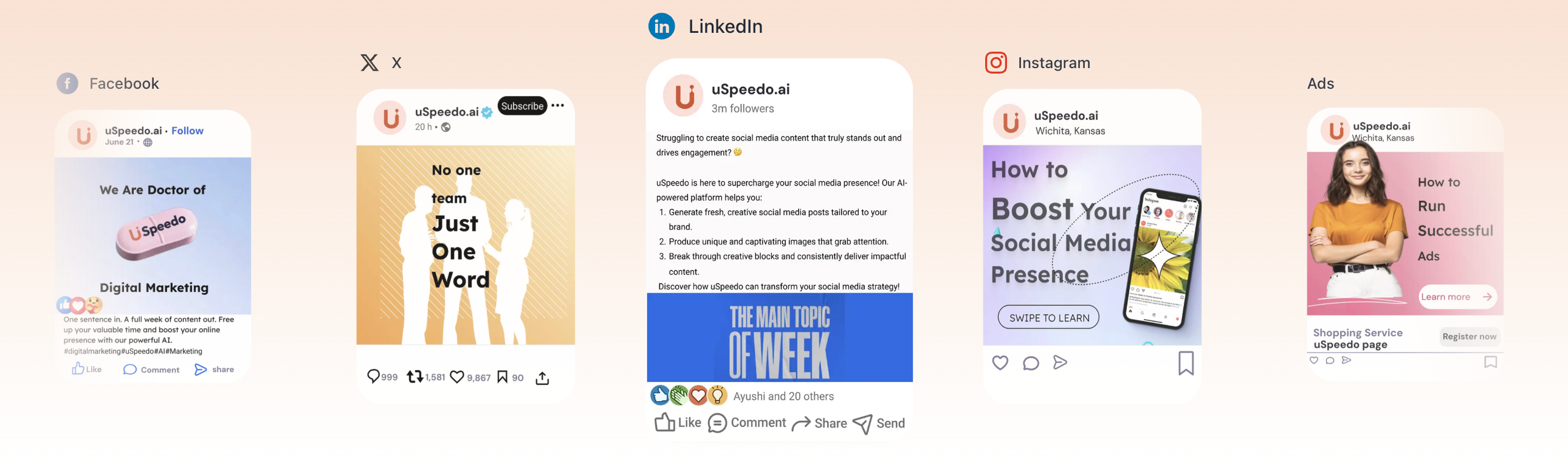

This barrier particularly affects professionals who need visual content but lack design backgrounds. E-commerce sellers, content creators, marketers, and educators often have clear visual ideas but no vocabulary to express them in AI prompt format. The gap between "I need a product photo with warm lighting" and "professional product photography, soft golden hour lighting, shallow depth of field, high resolution" represents a real obstacle to practical adoption.

Conversational Interface Approach

Banana AI, a platform built on Google's Nano Banana models, implements a chat-based approach to image generation. Users describe their needs in natural language, receive generated images, and request changes through continued conversation. The system maintains context across the conversation, allowing iterative refinement without restarting from scratch.

The technical implementation uses a workflow engine that processes user messages, manages generation state, and coordinates between multiple AI models. When a user requests an image, the system:

- Parses the natural language request

- Routes to an appropriate model (Nano Banana, Nano Banana 2, or Nano Banana Pro)

- Generates the image with specified parameters

- Returns the result with context preserved for follow-up requests
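The four steps above can be sketched in TypeScript roughly as follows. This is a minimal illustration under stated assumptions, not Banana AI's actual code: `Session`, `Turn`, `parseRequest`, `routeModel`, `handleMessage`, and the injected `Generate` callback are hypothetical names, routing simply uses whichever model the session has selected, and generation is kept synchronous for brevity even though a real backend call would be asynchronous.

```typescript
// Hypothetical sketch of the parse -> route -> generate -> return flow.
// None of these names come from a real Banana AI API.
type ModelId = "nano-banana" | "nano-banana-2" | "nano-banana-pro";

interface Turn {
  request: string;
  model: ModelId;
  imageUrl: string;
}

interface Session {
  selectedModel: ModelId; // model the user has chosen for this conversation
  history: Turn[];        // earlier turns, preserved for follow-up requests
}

// The image backend is injected so the flow can run without a real API.
type Generate = (model: ModelId, request: string, context: Turn[]) => string;

// Step 1: parse. Here "parsing" only normalizes whitespace; a production
// system would presumably extract subject, style, and parameters.
function parseRequest(message: string): string {
  return message.trim().replace(/\s+/g, " ");
}

// Step 2: route. Assumed rule: use the model selected in the session.
function routeModel(session: Session): ModelId {
  return session.selectedModel;
}

// Steps 3 and 4: generate, then store the turn so later messages can build on it.
function handleMessage(session: Session, message: string, generate: Generate): Turn {
  const request = parseRequest(message);
  const model = routeModel(session);
  const imageUrl = generate(model, request, session.history);
  const turn: Turn = { request, model, imageUrl };
  session.history.push(turn);
  return turn;
}

// Usage with a stub backend that fabricates a file name instead of calling an API.
const session: Session = { selectedModel: "nano-banana", history: [] };
const stub: Generate = (model, _request, context) => `${model}-turn${context.length + 1}.png`;

handleMessage(session, "A ceramic mug on a wooden table", stub);
session.selectedModel = "nano-banana-pro"; // user switches model mid-conversation
const second = handleMessage(session, "make the lighting warmer", stub);
console.log(second.model, second.imageUrl); // nano-banana-pro nano-banana-pro-turn2.png
```

Injecting the generator keeps the conversation logic independent of any particular model API, which is one plausible way to support several models behind the same chat interface.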

Users can switch between models mid-conversation. A typical workflow might start with Nano Banana for fast drafts at 5 credits per image, then switch to Nano Banana Pro for final output with better composition analysis. This multi-model approach balances cost, speed, and quality within a single session.
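To make the cost side of that trade-off concrete, here is a rough calculation for one session that drafts on Nano Banana and finishes on Nano Banana Pro. The per-image credit costs are the ones quoted in this article's pricing section; the `Step` type, the `sessionCredits` helper, and the choice of four drafts plus one final image are assumptions made for illustration.

```typescript
interface Step {
  model: string;
  creditsPerImage: number;
  images: number;
}

// Total credits consumed by a sequence of generation steps.
function sessionCredits(steps: Step[]): number {
  return steps.reduce((total, step) => total + step.creditsPerImage * step.images, 0);
}

// Hypothetical session: four quick drafts, then one high-resolution final.
// Nano Banana Pro is listed at 10-20 credits; the upper bound is used here.
const draftThenFinal: Step[] = [
  { model: "Nano Banana", creditsPerImage: 5, images: 4 },
  { model: "Nano Banana Pro", creditsPerImage: 20, images: 1 },
];

// The same five images, all generated on Nano Banana Pro at the top rate.
const allPro: Step[] = [{ model: "Nano Banana Pro", creditsPerImage: 20, images: 5 }];

console.log(sessionCredits(draftThenFinal)); // 40
console.log(sessionCredits(allPro));         // 100
```

Under these assumed numbers, drafting on the cheaper model uses 40 credits instead of 100 for the same number of images.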

Key Technical Capabilities

Text Rendering in Images

One significant limitation of AI image generation has been text rendering. Generated text often appears garbled or unreadable, requiring post-processing in image editing software. Nano Banana Pro addresses this by rendering text accurately within generated images.

The capability works across multiple languages including English, Chinese, Japanese, and Korean. For use cases like marketing materials, product mockups, and educational diagrams, this eliminates the need for manual text overlay after generation. A YouTuber creating thumbnails can generate images with readable headlines directly. An e-commerce seller can produce product photos with visible brand names and labels.

4K Resolution Output

The platform supports generation up to 3840x2160 pixels. This resolution enables use cases that typical 1024px or 2048px AI-generated images cannot serve, such as large-format printing, packaging design, and high-resolution hero images for websites. The technical implementation uses Google's Gemini models, which support higher-resolution output than earlier image generation architectures.

Ultra-Wide Aspect Ratios

Nano Banana 2 supports 14 aspect ratios, including the 8:1 ultra-wide and 1:8 ultra-tall formats. These dimensions enable compositions that standard AI tools cannot generate: web banners, panoramic landscapes, vertical infographics, and social media story formats. For content platforms and marketing teams, these ratios reduce manual cropping and composition work.
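As a rough illustration of what these ratios mean in pixels, the helper below derives the short edge from a ratio and a long-edge length. The article does not state the pixel sizes Nano Banana 2 actually produces at each ratio, so the 3840-pixel long edge is only an assumed example, and `dimensionsFor` is a hypothetical helper rather than part of any API. The 16:9 case is included only because it reproduces the 3840x2160 figure mentioned earlier.

```typescript
interface Size {
  width: number;
  height: number;
}

// ratioW:ratioH is width:height; longEdge is the longer side in pixels.
function dimensionsFor(ratioW: number, ratioH: number, longEdge: number): Size {
  if (ratioW <= 0 || ratioH <= 0 || longEdge <= 0) {
    throw new Error("ratio parts and longEdge must be positive");
  }
  if (ratioW >= ratioH) {
    // Landscape or square: the width is the long edge.
    return { width: longEdge, height: Math.round((longEdge * ratioH) / ratioW) };
  }
  // Portrait: the height is the long edge.
  return { width: Math.round((longEdge * ratioW) / ratioH), height: longEdge };
}

console.log(dimensionsFor(8, 1, 3840));  // { width: 3840, height: 480 }
console.log(dimensionsFor(1, 8, 3840));  // { width: 480, height: 3840 }
console.log(dimensionsFor(16, 9, 3840)); // { width: 3840, height: 2160 }
```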

Real-World Applications

E-Commerce Product Photography

Amazon sellers listing multiple products face a choice: hire photographers at $30-50 per product photo or invest time in learning photography themselves. AI-generated product photos offer a third option.

Testing on Banana AI shows that sellers listing 200 SKUs per quarter can generate product photos for approximately $40 total credit cost. This assumes using Nano Banana 2 at 7 credits per image, with occasional iterations for refinement. The cost reduction makes high-volume product photography economically feasible for small sellers who previously relied on smartphone photos or generic marketplace images.
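The arithmetic behind that estimate can be checked in a few lines. The rate of 2 cents per credit is inferred from the article's own pricing (5 credits for approximately $0.10) rather than taken from a published per-credit price, and the constant names are illustrative. Amounts are kept in whole cents to avoid floating-point rounding.

```typescript
const CENTS_PER_CREDIT = 2;  // inferred: 5 credits is roughly $0.10
const CREDITS_PER_IMAGE = 7; // Nano Banana 2 at its lower credit cost
const SKUS = 200;

// One image per SKU with no retries.
const baseCredits = SKUS * CREDITS_PER_IMAGE;     // 1400 credits
const baseCents = baseCredits * CENTS_PER_CREDIT; // 2800 cents, i.e. $28

// Credits covered by the roughly $40 estimate, and what is left for retries.
const budgetCents = 4000;
const budgetCredits = budgetCents / CENTS_PER_CREDIT; // 2000 credits
const retryImages = Math.floor((budgetCredits - baseCredits) / CREDITS_PER_IMAGE);

console.log(baseCents / 100); // 28
console.log(retryImages);     // 85
```

On these assumptions, about $28 covers one image per SKU, and the remaining $12 or so leaves room for about 85 regenerations, a little under one retry for every two products.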

Content Creation Workflows

YouTube creators report reducing thumbnail creation time from 2 hours in Photoshop to approximately 5 minutes with AI generation. The text rendering capability produces readable headlines without manual text overlay. Iterative refinement through conversation allows testing multiple variations quickly.

Social media managers handling multiple brand accounts can generate platform-specific aspect ratios from a single concept. One manager reported running five brand accounts independently using AI-generated content, producing a consistent visual style without design team support.

Educational Content Development

Teachers creating diagrams, timelines, and illustrations often lack design tools and skills. AI generation with accurate text labels enables quick production of educational materials. Multilingual text rendering supports the same diagram in English, Spanish, and Mandarin from a single prompt, useful for multilingual classrooms.

Comparison with Existing Tools

Midjourney

Midjourney produces high-quality images but requires Discord interaction and prompt engineering. Users comfortable with Discord workflows and willing to learn parameter syntax can achieve excellent results. The conversational approach suits users who want to describe needs in natural language rather than craft prompts.

DALL-E / ChatGPT Image Generation

DALL-E provides single-turn generation, so users cannot iterate on a result through continued conversation. ChatGPT's image generation offers conversational context but limits resolution and aspect ratio options. The Nano Banana models support higher resolution and more aspect ratios, though DALL-E may have broader style capabilities for some artistic use cases.

Adobe Firefly

Adobe Firefly integrates with Creative Cloud workflows, which is an advantage for users already in the Adobe ecosystem, but it requires a subscription. Banana AI's credit-based pricing allows pay-per-use without a subscription commitment, which may be more cost-effective for sporadic needs.

Technical Architecture

The platform runs on Cloudflare Workers for edge performance. Key technical components include:

- Next.js 15 with App Router for the frontend application

- Cloudflare D1 database for user data and credit management

- Cloudflare R2 for generated image storage

- Durable Objects for stateful workflow management

- Google Gemini API integration for image generation models

- Replicate API for additional model access

The workflow engine manages conversation state, model routing, credit allocation, and image generation pipelines. Each user session maintains context for multi-turn conversations, allowing the system to understand follow-up requests like "make the lighting warmer" without repeating the entire original prompt.
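One simple way to picture this context handling is a state object that stores the original description plus each refinement, and rebuilds the full prompt on every turn. This is a sketch under that assumption only: the article does not describe how the workflow engine actually represents state, and `ConversationState`, `emptyState`, `applyMessage`, and `effectivePrompt` are hypothetical names.

```typescript
interface ConversationState {
  basePrompt: string | null; // first description in the conversation
  refinements: string[];     // follow-up requests, in order
}

function emptyState(): ConversationState {
  return { basePrompt: null, refinements: [] };
}

// Returns a new state: the first message becomes the base prompt,
// and every later message is recorded as a refinement.
function applyMessage(state: ConversationState, message: string): ConversationState {
  const text = message.trim();
  if (state.basePrompt === null) {
    return { basePrompt: text, refinements: [] };
  }
  return { basePrompt: state.basePrompt, refinements: [...state.refinements, text] };
}

// Prompt sent to the model: the base description followed by all refinements.
function effectivePrompt(state: ConversationState): string {
  if (state.basePrompt === null) {
    throw new Error("no request yet");
  }
  return [state.basePrompt, ...state.refinements].join(". ");
}

let state = emptyState();
state = applyMessage(state, "A ceramic mug on a wooden table");
state = applyMessage(state, "make the lighting warmer");
console.log(effectivePrompt(state));
// A ceramic mug on a wooden table. make the lighting warmer
```

Because `applyMessage` returns a new object instead of mutating the old one, each turn's state can be persisted or rolled back independently, which is one reasonable fit for a stateful per-session store.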

Pricing Model

The credit-based system charges per image rather than a flat subscription fee:

- Nano Banana: 5 credits per image (approximately $0.10)

- Nano Banana 2: 7-14 credits depending on resolution (approximately $0.14-$0.28)

- Nano Banana Pro: 10-20 credits depending on resolution (approximately $0.20-$0.40)

The free tier provides 10 credits for testing. Paid tiers range from $9.90/month for 500 credits to $29.90/month for 2,000 credits, with yearly plans offering better per-credit rates.
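For comparison, the per-credit rate and image yield of the two monthly tiers quoted above work out as follows. Only the prices, credit amounts, and per-image credit costs come from this article; the `Tier` type and helper names are illustrative, and the tiers are labeled by price because no plan names are given.

```typescript
interface Tier {
  label: string;
  priceCents: number; // monthly price in cents
  credits: number;
}

const tiers: Tier[] = [
  { label: "$9.90/month", priceCents: 990, credits: 500 },
  { label: "$29.90/month", priceCents: 2990, credits: 2000 },
];

// Cost of a single credit, in cents.
function centsPerCredit(tier: Tier): number {
  return tier.priceCents / tier.credits;
}

// Whole images a tier's credits cover at a given per-image credit cost.
function imagesPerTier(tier: Tier, creditsPerImage: number): number {
  return Math.floor(tier.credits / creditsPerImage);
}

for (const tier of tiers) {
  console.log(
    tier.label,
    centsPerCredit(tier).toFixed(3) + " cents/credit",
    imagesPerTier(tier, 5) + " Nano Banana images",
    imagesPerTier(tier, 20) + " Nano Banana Pro images at 20 credits",
  );
}
// $9.90/month 1.980 cents/credit 100 Nano Banana images 25 Nano Banana Pro images at 20 credits
// $29.90/month 1.495 cents/credit 400 Nano Banana images 100 Nano Banana Pro images at 20 credits
```

The larger tier is about a quarter cheaper per credit, which matters mainly for users who expect to use most of the 2,000 credits.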

For users whose image volume varies from month to month, credit-based pricing can offer better value than a flat subscription. Users pay only for what they generate, without committing to monthly fees during periods of lower activity.

Discussion Points for the Community

- Prompt Engineering vs. Natural Language: Does conversational AI image generation lower the barrier enough for non-technical users, or does it simply shift the skill requirement to clear verbal description?

- Text Rendering Quality: How important is accurate text rendering for practical AI image generation? Are current capabilities sufficient for professional use, or do they still require manual refinement?

- Cost vs. Quality Trade-offs: Multi-model flexibility allows balancing cost and quality. What workflows make the most sense for different use cases?

- Integration with Existing Tools: How should AI-generated images fit into existing design workflows? Do they replace traditional tools or supplement them?

- Ethical Considerations: As AI image generation becomes more accessible, what responsibilities do platforms have regarding content authenticity, attribution, and misuse prevention?

Getting Started

The platform is accessible at bananai.net with a free tier for initial testing. No account is required to use the first 10 credits.

For developers interested in the technical implementation, the architecture uses open-source components (Next.js, Tailwind, Drizzle ORM) deployed to Cloudflare's edge network. The chat-based workflow demonstrates how AI image generation can integrate into conversational interfaces.

What are your experiences with AI image generation tools? Does the conversational approach address real pain points, or do you prefer direct prompt control?